3-Day fMRI Guided TMS Treatment Shows Results for Chronic Post-Concussion Syndrome

Cognitive FX pioneers accelerated TMS protocol that reduces concussion symptoms by 63% in just three days

Published peer-reviewed research shows that Cognitive FX treatment leads to meaningful symptom reduction in post-concussion symptoms for 77% of study participants. Cognitive FX is the only PCS clinic with third-party validated treatment outcomes.

READ FULL STUDY

The Hariri lab at Duke University recently published a review that questions the reliability of task-based fMRI as used to examine individual patients.

At Cognitive FX, we’ve used fMRI images for years to determine which of our patients’ brain regions were affected by traumatic brain injury and then tailor their treatment accordingly. We’ve also published multiple peer-reviewed papers showing how fMRI can help in assessing long-term symptoms from concussions and other TBI.

So, clearly, we have a vested interest in the topic of task-based fMRI — and the expertise to understand the concerns raised by Hariri and his team. A number of our patients have reached out to us, hoping to make sense of the findings. Here’s the short answer:

While we differ with them on some points, they raise valid concerns about the way fMRI has been applied in certain studies. Namely, they assert that an individual patient’s condition cannot be diagnosed accurately with fMRI unless certain statistical practices and other specific measures are in place. They take issue with studies that have published results without taking those measures. Fortunately, we do not make the mistakes they outlined.

Instead, we very carefully use whole-brain signatures (something the Duke study recommends) in a process that results in high test-retest reliability on a patient-by-patient basis. Our average ICC, a measure of reliability explained below, is 0.87, well above the 0.5 ICC benchmark for reliability stated in the Duke study. In addition, we’ve hand-crafted the tests we use for consistency, and we’ve imaged over 5,000 people in the course of our work. In other words, the neuroimaging we do with our patients is meaningful and valid.

Overall, the findings in the Duke study do not invalidate the use of fMRI in general (as some sensational headlines have falsely claimed). They only emphasize the importance of doing fMRI correctly, which we agree with and do ourselves.

That’s the short answer. If you’d like the long answer, we cover it in detail in the rest of this post.

If you suffer from persistent symptoms after sustaining a head injury, we can help. 95% of our patients show statistically verified restoration of brain function after treatment. Contact our team for a consultation.

Note: Any data relating to brain function mentioned in this post is from our first generation fNCI scans. Gen 1 scans compared activation in various regions of the brain with a control database of healthy brains. Our clinic is now rolling out second-generation fNCI which looks both at the activation of individual brain regions and at the connections between brain regions. Results are interpreted and reported differently for Gen 2 than for Gen 1; reports will not look the same if you come into the clinic for treatment.

fMRI (functional magnetic resonance imaging) scans use radio waves and magnets to look at blood flow through soft tissues. The magnetic field from an fMRI scanner can very precisely magnetize the desired tissue such that, when a radio wave passes through it, that wave is altered. The scanner measures the changes in those radio waves, so if blood oxygenation levels change in a region (signaling increased activity), the fMRI will show the change (known as a BOLD signal). We can then translate this into a 3D map of the observed tissue that shows where blood flow, and thus brain activity, is and is not happening when a patient performs a task.

In contrast, a structural MRI can generate an image of brain structure. It can answer questions like, “Are all the physical parts of the brain accounted for?” and “What condition are they in?”

Combine the information from a structural MRI and superimpose data from an fMRI, and you have a very precise record of how blood moves through the brain in response to a specific task.

Nevertheless, scientists have to be very careful about how they interpret data. Look no further than a dead salmon for a lesson in using statistics correctly: when the authors of that study analyzed their scans without correcting for multiple comparisons, they detected a BOLD signal (indicating brain activity) in the dead salmon.

So it’s important to take the correct steps to avoid false positive results. But not everyone doing fMRI knows how to do that, and it’s easy to impose your own preferred narrative onto fMRI results if you’re not designing and interpreting the research very carefully.
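To make the false-positive problem concrete, here is a minimal Python sketch. It is a hypothetical simulation, not the salmon study's data or pipeline: every "voxel" is pure random noise, so any voxel that crosses the threshold is a false positive. The function name, voxel counts, and thresholds are assumptions chosen for illustration, and Bonferroni correction stands in for whichever correction method a real analysis would use.

```python
import random
from statistics import NormalDist


def count_false_positives(n_voxels=10_000, alpha=0.001, seed=0):
    """Count noise-only voxels that look 'active' with and without correction.

    Each voxel gets a z-score drawn from a standard normal distribution,
    which is what we would expect if there were no real signal anywhere.
    """
    rng = random.Random(seed)
    z_scores = [rng.gauss(0.0, 1.0) for _ in range(n_voxels)]

    std_normal = NormalDist()
    # One-sided cutoff applied to every voxel independently (no correction).
    uncorrected_cutoff = std_normal.inv_cdf(1 - alpha)
    # Bonferroni: divide alpha by the number of tests performed.
    bonferroni_cutoff = std_normal.inv_cdf(1 - alpha / n_voxels)

    uncorrected = sum(z > uncorrected_cutoff for z in z_scores)
    corrected = sum(z > bonferroni_cutoff for z in z_scores)
    return uncorrected, corrected


if __name__ == "__main__":
    uncorrected, corrected = count_false_positives()
    print("false positives without correction:", uncorrected)
    print("false positives with Bonferroni:", corrected)
```

With thousands of voxels tested at once, an uncorrected threshold is expected to flag a handful of them by chance alone, which is the trap the salmon study illustrated.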

And that leads us to the Hariri study.

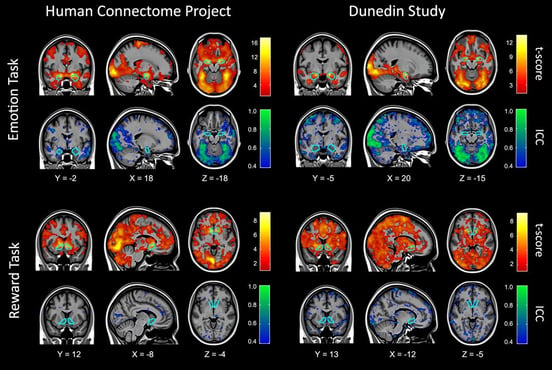

Figure 4 from the Duke paper showing reliability.


“It is not the tool that is problematic,” the authors write, “but rather the strategy of adopting tasks developed for experimental cognitive neuroscience that appear to be poorly suited for reliably measuring differences in brain activation between people.” In other words, fMRI, in and of itself, is an excellent tool. It’s how scientists use that tool and interpret their data that can go awry.

The central topic of the journal article is something called test-retest reliability. The concept is fairly simple: if you put someone in an fMRI machine two different times but have them complete the exact same task, you should see the same results both times. And if you put different people in an fMRI machine and have them complete the same task, you should see roughly the same patterns in order to draw conclusions about that brain process. A test with that reproducibility would be considered reliable.

But when members of Hariri’s lab analyzed fMRI data from different published studies, they found that task-based fMRI (putting someone in the MRI scanner and having them complete a specific task, like viewing pictures of someone’s face) had extremely low test-retest reliability.

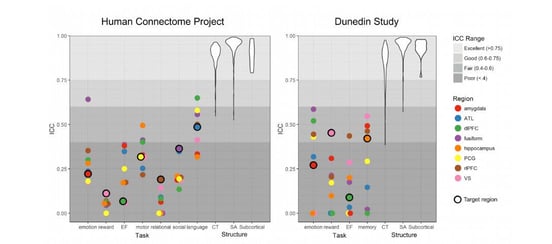

Figure 5 from the Duke study. Most of the ICCs they reported were below 0.5.

Their primary (but not only) metric for evaluating the reliability of these results was a statistical measurement called the intraclass correlation coefficient (ICC). A good ICC is above 0.5 (the closer to 1, the better), and a bad ICC is lower. They define an “Excellent” ICC range as >0.75. (Our ICCs average 0.87, well into this excellent range.)
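For readers who want to see how an ICC is computed, here is a minimal Python sketch. It assumes one common variant, the two-way, consistency, single-measurement form often written ICC(3,1); the Duke paper and our own analyses may use a different variant, and the scores below are made-up numbers, not patient data.

```python
from statistics import mean


def icc_consistency(scores):
    """ICC(3,1): two-way mixed effects, consistency, single measurement.

    scores: one row per person, one column per scan session.
    Returns a value near 1 when people keep their relative positions
    from session to session, and near 0 (or below) when they do not.
    """
    n = len(scores)       # number of people
    k = len(scores[0])    # number of sessions per person
    grand = mean(v for row in scores for v in row)
    row_means = [mean(row) for row in scores]
    col_means = [mean(row[j] for row in scores) for j in range(k)]

    ss_rows = k * sum((m - grand) ** 2 for m in row_means)   # between people
    ss_cols = n * sum((m - grand) ** 2 for m in col_means)   # between sessions
    ss_total = sum((v - grand) ** 2 for row in scores for v in row)
    ss_error = ss_total - ss_rows - ss_cols                  # residual

    ms_rows = ss_rows / (n - 1)
    ms_error = ss_error / ((n - 1) * (k - 1))
    return (ms_rows - ms_error) / (ms_rows + (k - 1) * ms_error)


if __name__ == "__main__":
    # Hypothetical activation scores for four people scanned twice.
    stable = [[1, 2], [2, 3], [3, 4], [4, 5]]     # same ordering both times
    unstable = [[1, 4], [2, 1], [3, 3], [4, 2]]   # ordering scrambled
    print("stable:", icc_consistency(stable))
    print("unstable:", icc_consistency(unstable))
```

In the stable example everyone shifts by the same amount between sessions, so the consistency ICC is 1. In the unstable example the ordering of people changes between sessions, and the ICC falls well below the 0.5 benchmark.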

Because they found so many low ICCs in fMRI studies, the paper authors concluded that “task-fMRI measures are not currently suitable for brain biomarker discovery or individual differences research.” Their definition of a biomarker is “a biological indicator often used for risk stratification, diagnosis, prognosis and evaluation of treatment response.”

In other words, they do not think you can look at the human brain in a functional MRI machine and use the results to determine whether or not the patient has a specific condition.

On the other hand, they do believe that studies establishing trends on a population level are still perfectly valid. You can look at a group of people and determine that having them find the numbers 1-20 as fast as they can involves the thalamus, for example, but you couldn’t look at an individual and evaluate how well their thalamus is functioning — at least, not with currently accepted practices, according to the paper authors.

And that leads us to the core issue when it comes to diagnosing post-concussion syndrome. Every day, we look at fMRI scans and tell patients which brain regions are experiencing dysfunction due to a brain injury. Isn’t that exactly the type of determination the Duke paper calls into question?

To understand why we can make those determinations requires both a closer look at what the Duke paper is saying and a better understanding of what we do when we image each patient.

If you want to do functional MRI on an individual level the right way, you need to spend time in two key areas:

To obtain trustworthy data, you need to have excellent tasks and analysis. To that end, the Hariri group makes a few recommendations in their paper:

The key points are (1) and (4), because that’s where designing the tasks and analyzing the results correctly happens. If you do those, you need fewer people to get good results (although, for the record, we always ensure statistically adequate sample sizes for all of our work). Since Cognitive FX’s founding, we’ve collected images from over 5,000 patients at our clinic!

One of the criticisms leveled against fMRI practitioners is that many focused on specific regions of the brain in their analysis without taking into account what’s happening throughout the brain (the whole brain signatures mentioned above).

We already knew it’s absolutely necessary to take whole brain signatures into account. Here’s a simplified sketch of how we, at Cognitive FX, look at fMRI data:

Now the main thing investigated by the Duke study was test-retest reliability of Person A to Person B. But that’s not the same thing as comparing Person A’s results with Person A’s results weeks or months later.

And as it turns out, when you anchor your statistical analysis of a person’s fMRI scans to the entirety of their own brain, you get much more consistent results for that person if they’re rescanned. Relativizing a person’s scans to their whole brain means you can take into account fluctuations in the brain that would otherwise skew your data. So that’s what we’ve been doing in our research for over a decade.
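As a rough illustration of the idea of relativizing to the whole brain, here is a minimal Python sketch. It is a toy example under stated assumptions, not our actual analysis pipeline: the region names, the numbers, and the choice to divide each region by the whole-brain mean are all hypothetical.

```python
import math
from statistics import mean


def relativize(region_signals):
    """Express each region's signal relative to the whole-brain average.

    region_signals: dict mapping a region name to its raw signal.
    Dividing by the mean across all regions removes any global shift in
    signal strength that affects every region by the same factor.
    """
    whole_brain = mean(region_signals.values())
    return {name: value / whole_brain for name, value in region_signals.items()}


if __name__ == "__main__":
    # Hypothetical raw signals for one person on two different days.
    session_1 = {"thalamus": 1.0, "anterior_insula": 2.0, "basal_ganglia": 3.0}
    # Same pattern, but everything is 50% stronger (for example, a global
    # fluctuation unrelated to the task).
    session_2 = {name: value * 1.5 for name, value in session_1.items()}

    rel_1 = relativize(session_1)
    rel_2 = relativize(session_2)
    for name in session_1:
        print(name, rel_1[name], rel_2[name],
              math.isclose(rel_1[name], rel_2[name]))
```

The raw numbers differ between the two sessions, but the relativized values match, because the global change cancels out when each region is compared with the rest of the same brain.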

In 2003, before we ever set our sights on concussion detection, we set out to establish a brain imaging protocol for fMRI that would ensure test-retest reliability. One thing led to another, and the tests we developed were perfect for detecting post-concussion brain dysfunction.

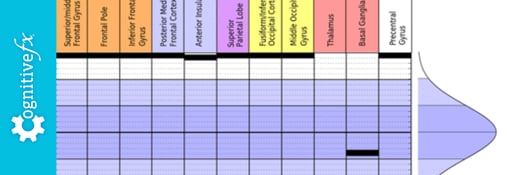

So, in addition to having excellent test-retest reliability as discussed above, we’re also not using the off-the-shelf test protocols that the Duke study criticizes. Instead, we built six test tasks with the goal of reproducibility in mind. You don’t want to use tasks that allow the brain to switch strategies mid-way through.

We created tasks that:

The reason “off the shelf” tasks don’t work well for studying individuals is that they weren’t designed for it. If you’re a scientist studying which regions of the brain activate during risk-taking, you don’t have to use something that precise. You can give each person a gambling task and then average the data across a population of participants to get the information you need. It’s sufficient for identifying trends in populations; it’s not sufficient for examining one person’s brain with an eye to diagnosis.

When you’re designing for individual evaluation, you have to standardize the task as much as possible. For example, if you give someone a task to find as many words as they can that start with a certain letter, you need to pick a set of letters with roughly the same number of words in the language you’re using. If you’re asking someone to memorize a list of words, then your lists need to have words that are of similar length, difficulty, and diversity.

That attention to detail is what separates the tasks we design specifically for use in diagnosing concussion from the off-the-shelf tasks that neuroscientists often turn to out of complacency.

Using the functional imaging methods we outlined above, we’re able to clearly see when someone’s brain deviates from the norm. We can look at specific brain areas — such as the thalamus, basal ganglia, or anterior insula — and tell whether they are hypo- or hyperactive compared to uninjured brains.

And when we do so, it gives us valuable information that lets us tailor treatment to the needs of each patient.
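The comparison against uninjured brains can be pictured with a simple z-score. The Python sketch below is only an assumption-laden illustration: the control values, the cutoff of 2 standard deviations, and the function name are hypothetical, and the statistics behind our actual reports are more involved.

```python
from statistics import mean, stdev


def classify_region(patient_value, control_values, cutoff=2.0):
    """Label a region as hypoactive, hyperactive, or typical.

    patient_value: the patient's activation score for one region.
    control_values: scores for the same region from healthy controls.
    cutoff: number of standard deviations treated as abnormal
            (a hypothetical threshold chosen for illustration).
    """
    control_mean = mean(control_values)
    control_sd = stdev(control_values)
    z = (patient_value - control_mean) / control_sd
    if z <= -cutoff:
        return "hypoactive", z
    if z >= cutoff:
        return "hyperactive", z
    return "typical", z


if __name__ == "__main__":
    # Hypothetical control scores for a single region.
    controls = [0.9, 1.0, 1.1, 1.0, 1.0]
    for value in (0.7, 1.02, 1.3):
        label, z = classify_region(value, controls)
        print(value, label, round(z, 2))
```

A score far below the control range is flagged as hypoactive, a score far above it as hyperactive, and anything in between as typical.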

Notably, the authors of the Duke paper do not take issue with using a BOLD (blood oxygenation level dependent) signal as an indication of neural activity or brain function. But others have shown more skepticism on the matter.

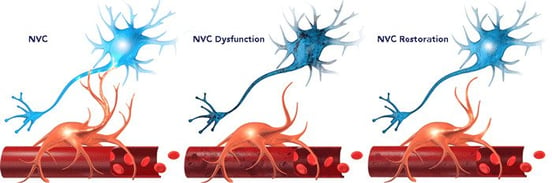

To recap: Blood flow isn't the same as brain activity. The actual activity is in whatever messaging is happening neuron-to-neuron, which we can't measure directly in a living person (with the exception of EEG, but EEG cannot look closely at specific brain regions). So most scientists take increased blood flow as evidence that the brain is doing work.

If the distinction matters to you, you may be relieved to know we don’t use the BOLD signal as a substitute for brain activity. We’re using it for what it is: a direct measurement of blood flow in the brain, which we can use in the diagnosis process.

That’s because concussion symptoms stem from dysfunctional neurovascular coupling. Neurovascular coupling is the relationship between neurons and the blood vessels that supply them. Concussions can disrupt the pathways and signaling patterns that result in healthy resource allocation during task completion.

One area of the brain may be unable to get the resources it needs to complete a task (hypoactive); another might complete the task, but use more than its fair share of the brain’s resources to do so (hyperactive). Either way, the brain’s inherent neuroplasticity is needed to correct the issue. Our treatment methods are based on engaging that neuroplasticity.

We do have some methodological disputes with the Duke paper (which we don’t need to discuss here), but we ultimately agree with their message. Imaging techniques must produce reliable, reproducible results in order to be used clinically.

Fortunately, by using good statistical methods and designing appropriate tasks, fMRI can be used to examine individual differences and diagnose conditions such as post-concussion syndrome. We’re doing it every day at our clinic in Provo, Utah.

If you suffer from persistent symptoms after sustaining a head injury, we can help. 95% of our patients show statistically verified restoration of brain function after treatment. Contact our team for a consultation.

Dr. Mark D. Allen holds a Ph.D. in Cognitive Science from Johns Hopkins University and received post-doctoral training in Cognitive Neuroscience and Functional Neuroimaging at the University of Washington. As a co-founder of Cognitive FX, he played a pivotal role in establishing the clinic's treatment approach. Dr. Allen is renowned for his pioneering work in adapting fMRI for clinical use. His contributions include the development of neuroimaging biomarkers for post-concussion diagnosis and research into the pathophysiology of chronic post-concussion symptoms. He has conducted over 10,000 individualized fMRI patient assessments and crafted a high-intensity interval training program for neuronal and cerebrovascular recovery. Dr. Allen has also co-engineered a machine learning-based neuroanatomical discovery tool and advanced fMRI analysis techniques, ensuring more reliable analysis for concussion patients.
